There’s a lot to unpack with all of the storage-related announcements at VMware Explore. You can easily tell why vSAN still has the lion’s share of the HCI space at 40% of the market. With announcements like vSAN Express Storage Architecture (ESA) last year, it’s no surprise VMware is on top. Now there are further advancements in the newly announced vSAN 8 Update 2, along with the announcement of vSAN Max.

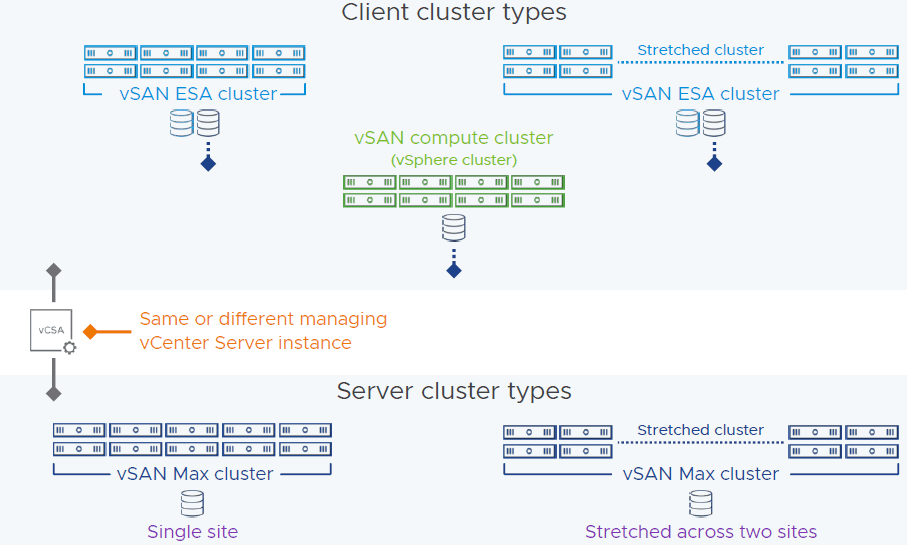

Let’s dig into the flexibility side. vSAN Max is disaggregated storage, which means that a cluster made up only of compute hosts can access vSAN storage from a dedicated storage cluster. This isn’t something cobbled together with iSCSI or NFS. This is an all-native vSAN stack. For example, you can have a regular vSAN cluster purely on blades without drives while the storage comes from a vSAN Max cluster. Something similar was possible before, but it is now optimized: up to 200% higher write throughput and up to a 70% reduction in latency. vSAN Max makes it easier to add more storage by simply adding a new storage host. vSAN Max can also be used in a stretched cluster on both the storage and compute sides.

Deploying a vSAN cluster will now give three options: vSAN HCI, vSAN Compute Cluster, and vSAN Max. Then you can select whether it is a stretched cluster or not. On the compute cluster side, only a couple of selections are needed before you can mount the remote datastore. You can check out the performance for vSAN Max and connected clusters on one dashboard. There is no support for in-place upgrades from any existing vSAN edition, and existing licenses cannot be used for vSAN Max. It will use a new per-TiB licensing model.
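
Since the licensing unit is TiB while drives are usually sold in TB, a quick conversion helps when sizing. This is only a rough sketch of the unit math; the function name is my own, and which capacity figure the license actually counts is not covered here, so treat the numbers as a starting point for a conversation with VMware rather than a quote.

```python
# Unit conversion only: decimal terabytes (TB) to binary tebibytes (TiB).
# How vSAN Max measures licensed capacity is NOT modeled here (assumption-free math only).

BYTES_PER_TB = 10**12   # decimal terabyte, as printed on drive labels
BYTES_PER_TIB = 2**40   # binary tebibyte, the unit named in the licensing model


def tb_to_tib(terabytes: float) -> float:
    """Convert a capacity in TB to TiB."""
    if terabytes < 0:
        raise ValueError("capacity cannot be negative")
    return terabytes * BYTES_PER_TB / BYTES_PER_TIB


if __name__ == "__main__":
    capacity_tb = 100  # hypothetical capacity, for illustration only
    print(f"{capacity_tb} TB is about {tb_to_tib(capacity_tb):.2f} TiB")
```

The takeaway is that a capacity quoted in TB comes out roughly 9% smaller when expressed in TiB.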

Now let’s switch gears to core platform advances. vSAN File Services now has feature parity between ESA and OSA (Original Storage Architecture). That means file services, such as NFS and SMB, are available in ESA. Also, ESA is now supported in VCF 5.1 along with vSAN Max.

There have been a lot of performance improvements with ESA. Users should expect higher throughput and lower latency on write-intensive workloads. DBAs will love this.

ESA can now support up to 500 VMs per host. That’s a big jump from the previous limit of 200 VMs. This is great news for VDI clusters.
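
To get a feel for what the higher limit means for a VDI cluster, here is a back-of-the-envelope sketch. The pool size and the single spare host are made-up numbers, and the function is just my own helper. It only looks at the per-host VM limit, so in practice CPU and memory will usually be the real constraint.

```python
import math


def hosts_needed(vm_count: int, vms_per_host: int, spare_hosts: int = 1) -> int:
    """Hosts required to place vm_count VMs under a per-host VM limit.

    spare_hosts is an assumed failover buffer, not a vSAN requirement.
    """
    if vm_count < 0 or vms_per_host <= 0 or spare_hosts < 0:
        raise ValueError("vm_count and spare_hosts must be >= 0, vms_per_host must be > 0")
    return math.ceil(vm_count / vms_per_host) + spare_hosts


if __name__ == "__main__":
    desktops = 3000  # hypothetical VDI pool size
    for limit in (200, 500):  # previous and new ESA per-host limits from this article
        print(f"{limit} VMs per host: {hosts_needed(desktops, limit)} hosts")
```

For this hypothetical pool the VM limit alone would have called for 16 hosts before and 7 now, which is why the change matters most for dense desktop workloads.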

ESA can now support much lower specs for a ReadyNode: as low as 16 cores, 128 GB of memory, 10 GbE networking, and 3.2 TB of storage per host. You will still get the benefit of the new snapshots, smaller failure domains, and improved efficiency to drive down costs. This should make ESA more appealing in small deployments.
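
If you want to sanity check existing hardware against those numbers, something like the following would do it. This is a minimal sketch: `HostSpec` and `shortfalls` are names I made up, and it only compares the four figures quoted above. Real ReadyNode qualification depends on VMware’s compatibility guide, which this does not consult.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostSpec:
    cores: int
    memory_gb: int
    network_gbps: int
    storage_tb: float


# Minimums as quoted in this article; not pulled from any VMware API.
ESA_MINIMUM = HostSpec(cores=16, memory_gb=128, network_gbps=10, storage_tb=3.2)


def shortfalls(host: HostSpec, minimum: HostSpec = ESA_MINIMUM) -> list[str]:
    """Return one message per resource where the host is below the minimum."""
    problems = []
    for name in ("cores", "memory_gb", "network_gbps", "storage_tb"):
        have, need = getattr(host, name), getattr(minimum, name)
        if have < need:
            problems.append(f"{name}: have {have}, need at least {need}")
    return problems


if __name__ == "__main__":
    candidate = HostSpec(cores=12, memory_gb=256, network_gbps=10, storage_tb=7.68)  # hypothetical host
    issues = shortfalls(candidate)
    print("meets the quoted minimums" if not issues else "\n".join(issues))
```

An empty list means the host clears the quoted minimums; anything else tells you which resource falls short.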

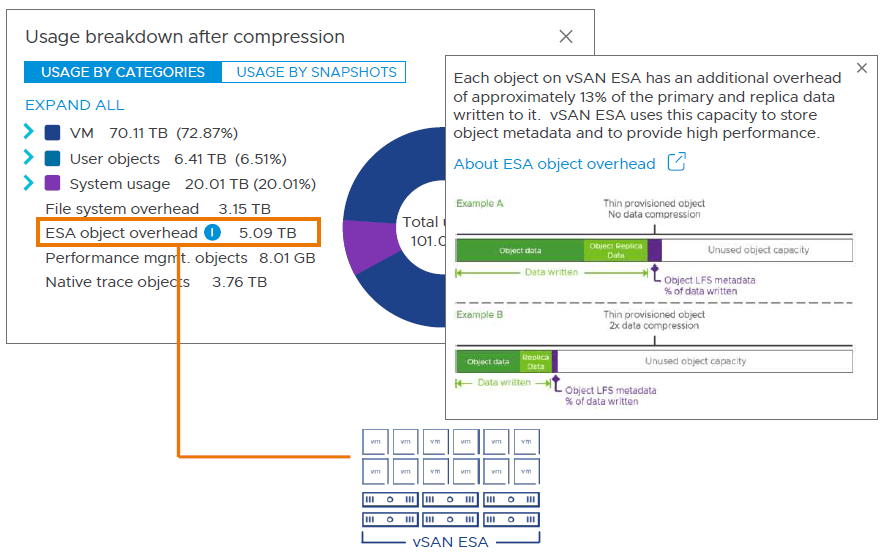

I will now wrap up with enhanced management. It’s now more straightforward to view capacity overheads in the UI. There’s now support for the key expiration attribute with a KMS based on KMIP v1.2. It applies to both OSA and ESA, and it’s integrated with Skyline Health, so you will find the expiration warnings there. There are also more options in the recommendations for troubleshooting an issue. For example, there will be a default option and an alternative. If you have a finding, there is improved information on the risk of not remediating it, and that information accounts for the specific version of vSAN that you are running. Also, you can now manage the lifecycle of all types of witness appliances in vLCM. These improvements are available in both OSA and ESA.

There are also other improvements and features that I didn’t mention in this article, so be sure to check out everything in VMware’s release notes. Put this all together and it’s a straightforward decision to go with ESA on new deployments with all of the new features and enhancements, but it makes sense to keep using OSA if you are on legacy hardware. I am looking forward to implementing ESA.